Claude vs. Codex vs. Human Dev: A Reality Check

TL;DR:

I pitted Claude Sonnet against Codex GPT 5.3, asking each to build an Angular + Spring Boot app from scratch in March 2026. Here’s what happened:

✅ Prototyping win: Both LLMs generated boilerplate in 20–30 minutes—faster than manual setup.

⚠️ Reality check: Neither produced working code out of the box.

- Codex struggled with Spring AI compatibility but had a better backend structure (feature-based).

- Claude ignored Java 25, used outdated Angular 19, but delivered a more polished UI.

- Both defaulted to outdated dependencies (Spring Boot 3.x, Angular 18 or 19) and security flaws (hardcoded passwords, lax REST methods).

🔍 Key takeaways:

- LLMs are assistants, not replacements. Senior review is mandatory for production.

- Explicit > implicit: Specify every version (Java, Spring Boot, Angular) or risk technical debt.

- Frontend > backend quality: Modern frameworks (Angular) yield better results than legacy Java patterns.

- Security is DIY: LLMs won’t flag hardcoded passwords or CVEs—you must audit everything.

💰 Worth it? $20/month saves time on boilerplate, but review overhead can offset gains in regulated industries.

I have to admit I’m in awe of the Twitter/X developers who proudly announce they don’t write or review LLM-generated code—they just manage 10, 20, or 100 agents.

Meanwhile, I use LLMs for what they’re good at: bug fixing and wrestling with CSS.

For everything else, I still roll up my sleeves.

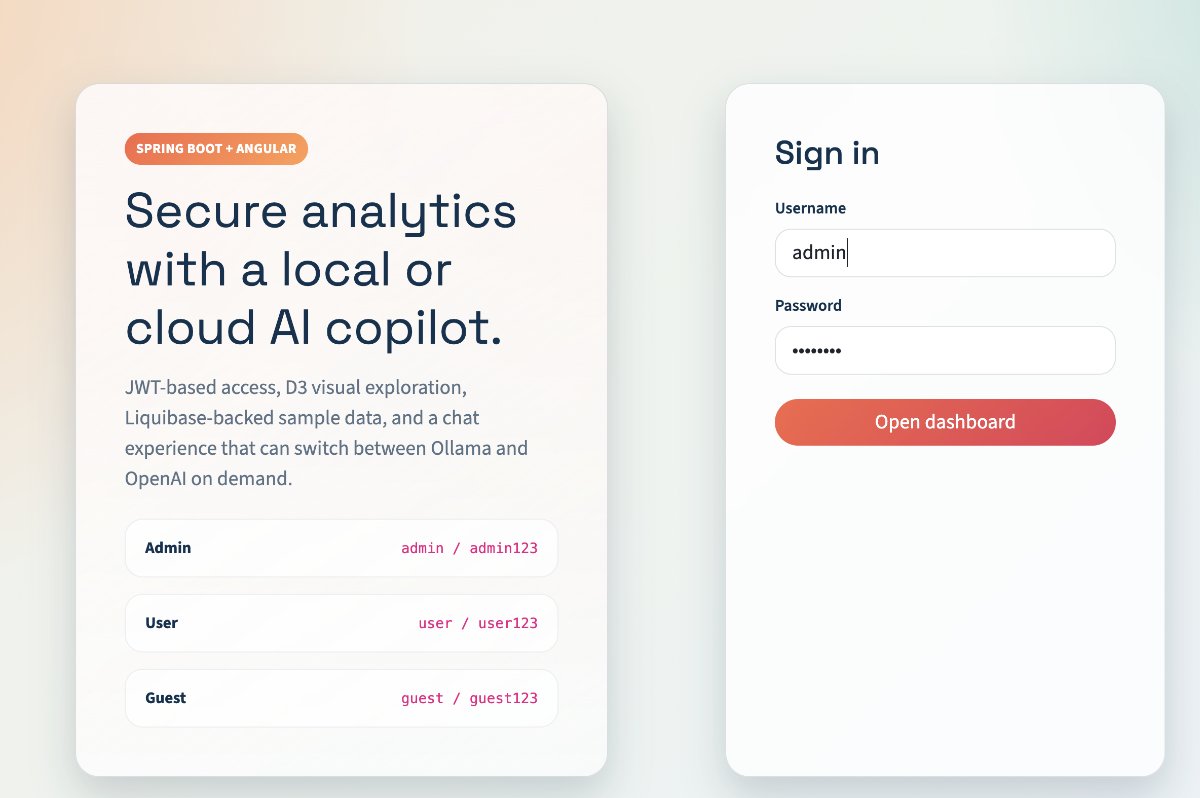

With some free time, I decided to test how these "magical" tools handle a greenfield project.

For existing projects with well-defined structures, they add features smoothly. But for something new? Let’s just say the results were educational.

The Challenge

Date: March 2026

Contenders: Claude Sonnet vs. Codex GPT 5.3

Task: Build a simple Angular + Spring Boot application with a database and role-based access.

Referee: Mistral AI (for neutral specs)

Rules: No custom instructions, no hand-holding—just raw, unfiltered LLM output.

You can find the specification used at the end of the post.

Building and Executing the Application

Both LLMs took 20–30 minutes to generate the code. The build failed immediately.

- Claude struggled with Angular syntax and database naming conventions.

- Codex had fewer issues but still hit snags with Spring AI compatibility.

- Verdict: Codex was smoother, but neither LLM produced a working app out of the box.

Versioning: A Dumpster Fire

Java 25

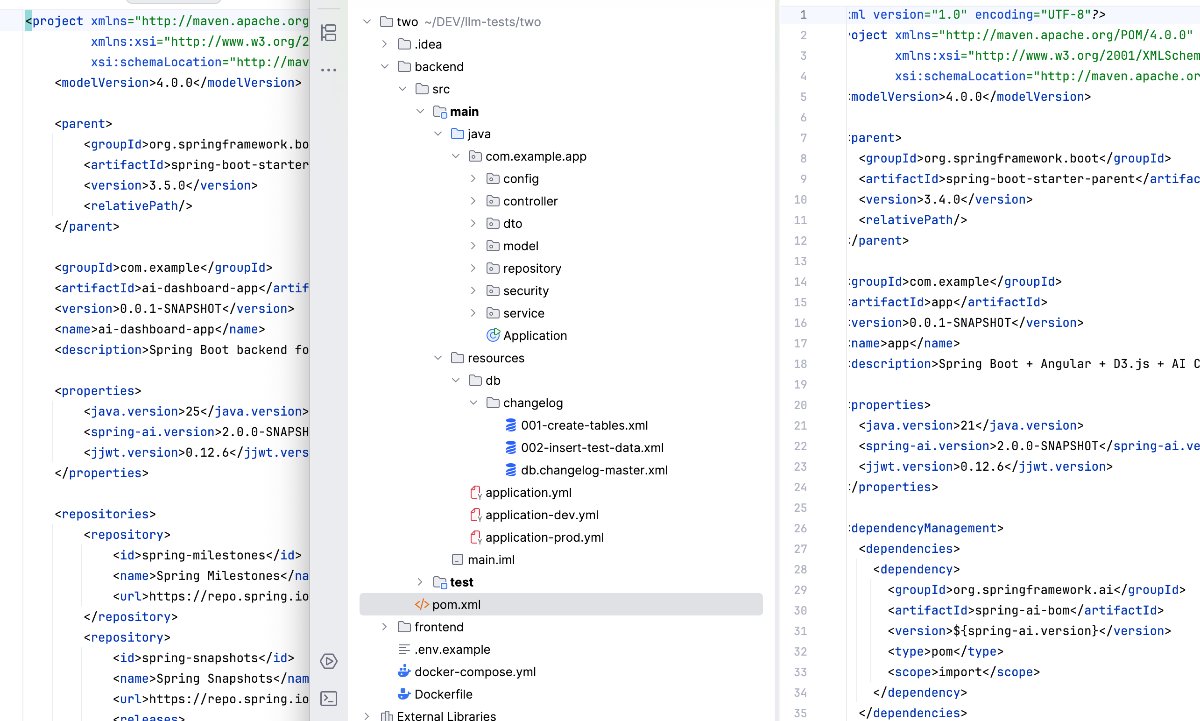

- Requested: Java 25

- Codex: Followed instructions.

- Claude: Ignored the request entirely. Bad LLM.

Spring Boot

- Requested: Latest release (4.0.3 as of March 2026)

- Codex: 3.5.0

- Claude: 3.4.0

- Reality: Both used outdated, unpatched versions—a security risk.

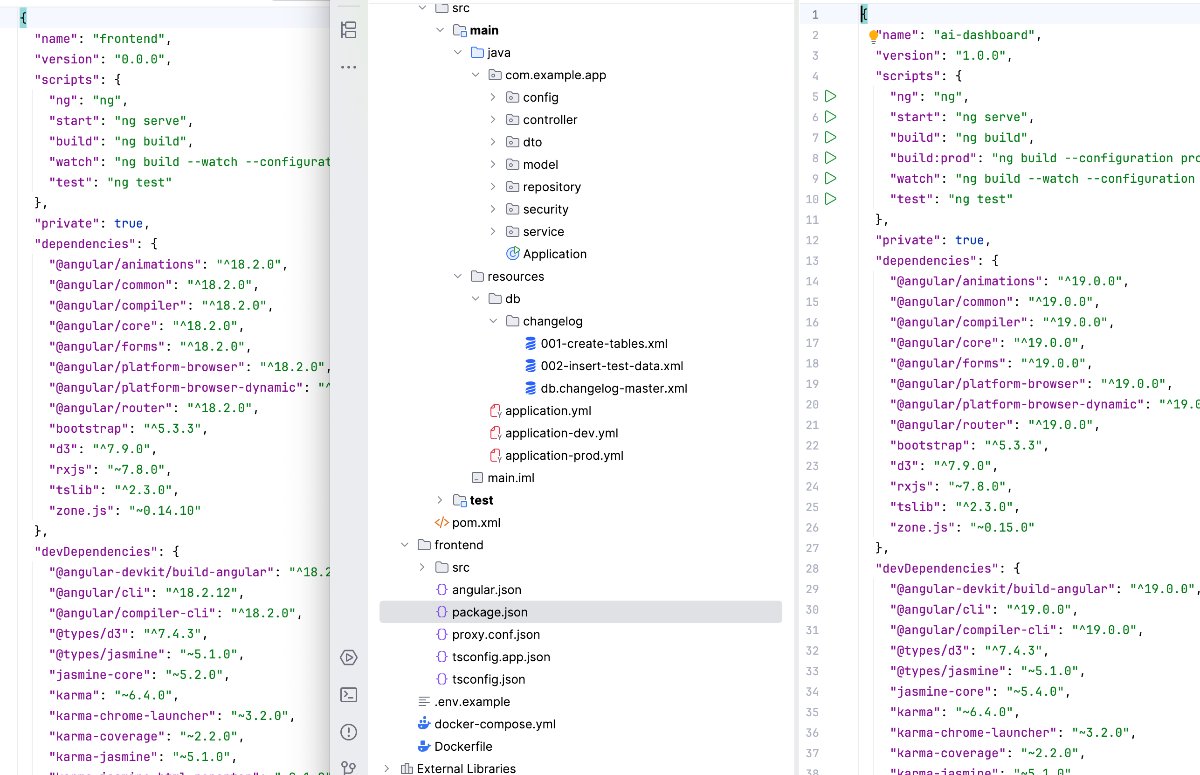

Angular

- Requested: Angular 21

- Codex: Angular 18 (with deprecated syntax like *ngIf)

- Claude: Angular 19 (using modern @if)

- At least they used standalone components.

Spring AI: The Biggest Pain Point

- Requested: Spring AI 2 (SNAPSHOT, but compatible with Spring Boot 4)

- Claude: Downgraded to Spring AI 1.0.0-M6 (a pre-release, not production-ready).

- Codex: Settled on Spring AI 1.0.3 (stable but outdated).

- Result: 20+ minutes wasted on dependency hell.
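
This kind of version drift is easy to catch automatically. Below is a minimal sketch, assuming you can collect the requested and generated versions into two maps; the VersionDrift class and the map keys are hypothetical names, and the comparison relies on the JDK's ModuleDescriptor.Version rather than on any build-tool API.

```java
import java.lang.module.ModuleDescriptor.Version;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical helper: flags dependencies whose generated version
// is older than the version requested in the spec.
final class VersionDrift {
    private VersionDrift() {}

    static List<String> findOutdated(Map<String, String> requested,
                                     Map<String, String> generated) {
        List<String> outdated = new ArrayList<>();
        for (Map.Entry<String, String> entry : requested.entrySet()) {
            String actual = generated.get(entry.getKey());
            if (actual == null) {
                continue; // dependency not present in the generated build
            }
            Version wanted = Version.parse(entry.getValue());
            if (Version.parse(actual).compareTo(wanted) < 0) {
                outdated.add(entry.getKey() + ": requested "
                        + entry.getValue() + ", got " + actual);
            }
        }
        return outdated;
    }
}
```

Fed with the numbers from this test (Spring Boot 4.0.3 requested, 3.4.0 generated by Claude), the helper reports "spring-boot: requested 4.0.3, got 3.4.0". A check like this could run in CI before anyone reviews the code by hand.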

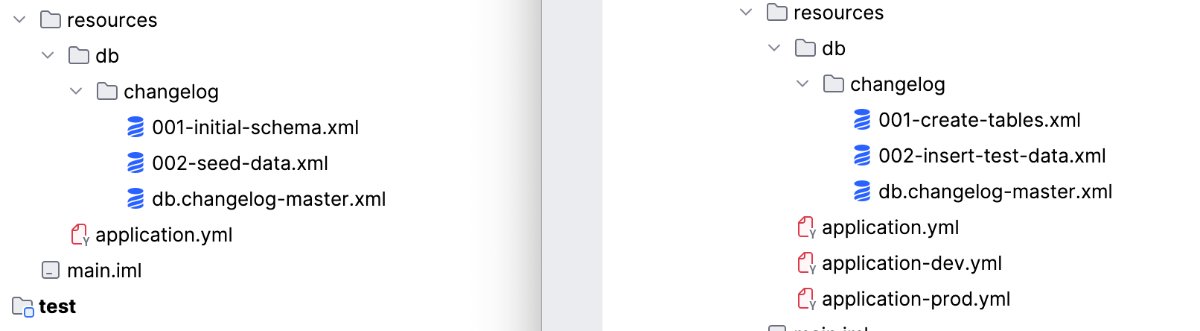

On the left is the Codex config, on the right Claude's:

The POM for the backend:

Angular package.json:

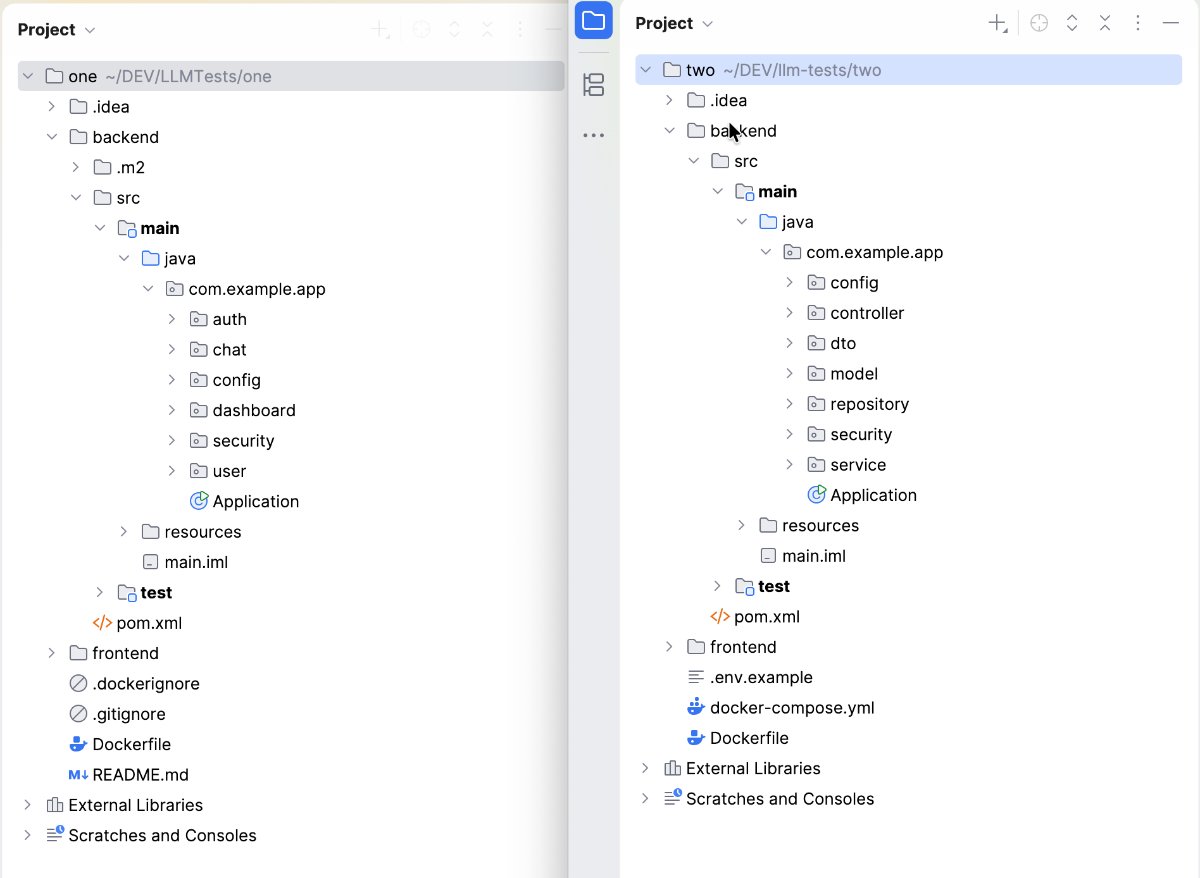

Code Structure: Codex Wins (Mostly)

- Codex: Used a feature-based structure (e.g., user/, order/). This aligns with professional projects.

- Claude: Opted for "Clean Architecture" (package by function). Not scalable for large projects.

Claude, on the right, doesn't organize the code by domain. To be fair, the spec gave no instruction on structure, so there is no real ground for complaint.

Configuration: Claude Did Better

- Format: Both used YAML (I’d have preferred .properties, but it wasn’t specified).

- Claude: Created three application.yml files (dev, prod, generic).

- Codex: Dumped everything into one file.

- Frontend: Claude used a proxy; Codex relied on environment variables. Different approaches, same result.

Code Quality: A Mixed Bag

Backend

- Claude: Used @Autowired (unacceptable even for juniors).

- Both: Used @Value (discouraged by the Spring team).

- Both: Avoided exposing entities (used DTOs/records). Good practice, surprisingly.
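
For reference, here is roughly what I would have liked to see instead of field injection. This is a minimal sketch in plain Java with no Spring on the classpath; every name in it (UserEntity, UserDto, UserRepository, UserService) is hypothetical and not taken from either generated project.

```java
import java.util.Objects;
import java.util.Optional;

// Stand-in for a JPA entity that should never leave the service layer.
final class UserEntity {
    final long id;
    final String username;
    final String passwordHash;

    UserEntity(long id, String username, String passwordHash) {
        this.id = id;
        this.username = username;
        this.passwordHash = passwordHash;
    }
}

// DTO as a record: exposes only the fields the API should return.
record UserDto(long id, String username) {
    static UserDto from(UserEntity entity) {
        return new UserDto(entity.id, entity.username);
    }
}

// Stand-in for a Spring Data repository.
interface UserRepository {
    Optional<UserEntity> findById(long id);
}

// Constructor injection: the dependency is final and required up front.
// In Spring, a class with a single constructor is wired through it
// without needing @Autowired.
final class UserService {
    private final UserRepository repository;

    UserService(UserRepository repository) {
        this.repository = Objects.requireNonNull(repository);
    }

    Optional<UserDto> findUser(long id) {
        return repository.findById(id).map(UserDto::from);
    }
}
```

The side benefit is testability: the service can be constructed with a lambda or a stub repository, with no application context needed.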

Frontend

- Codex: Used Angular signals (modern).

- Claude: Stuck to older patterns.

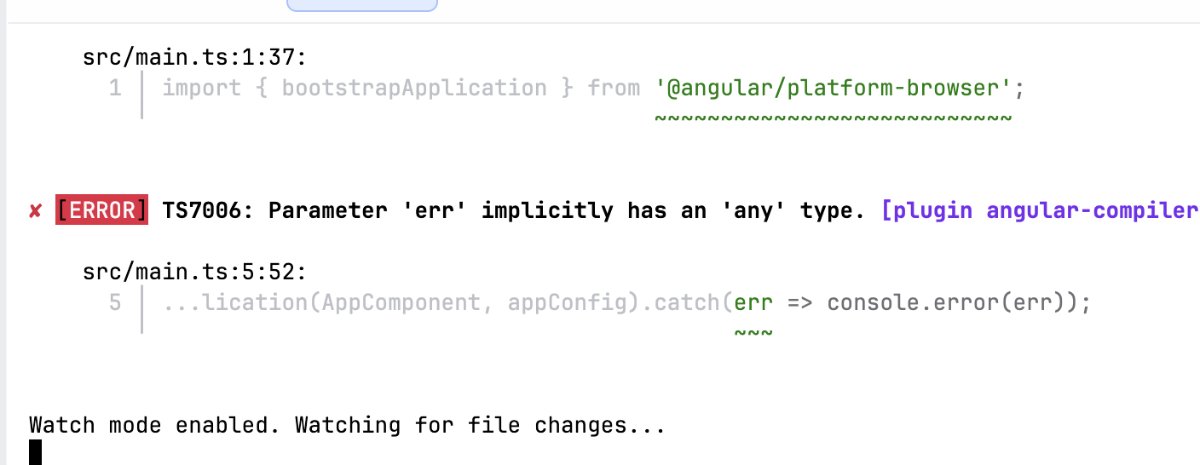

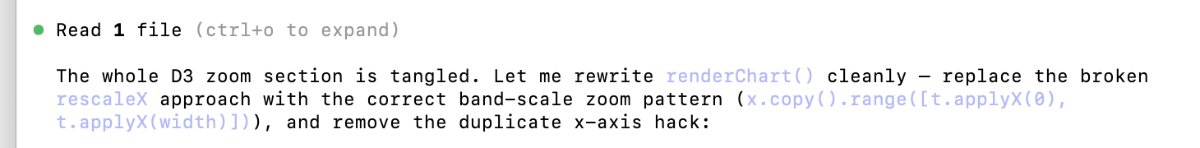

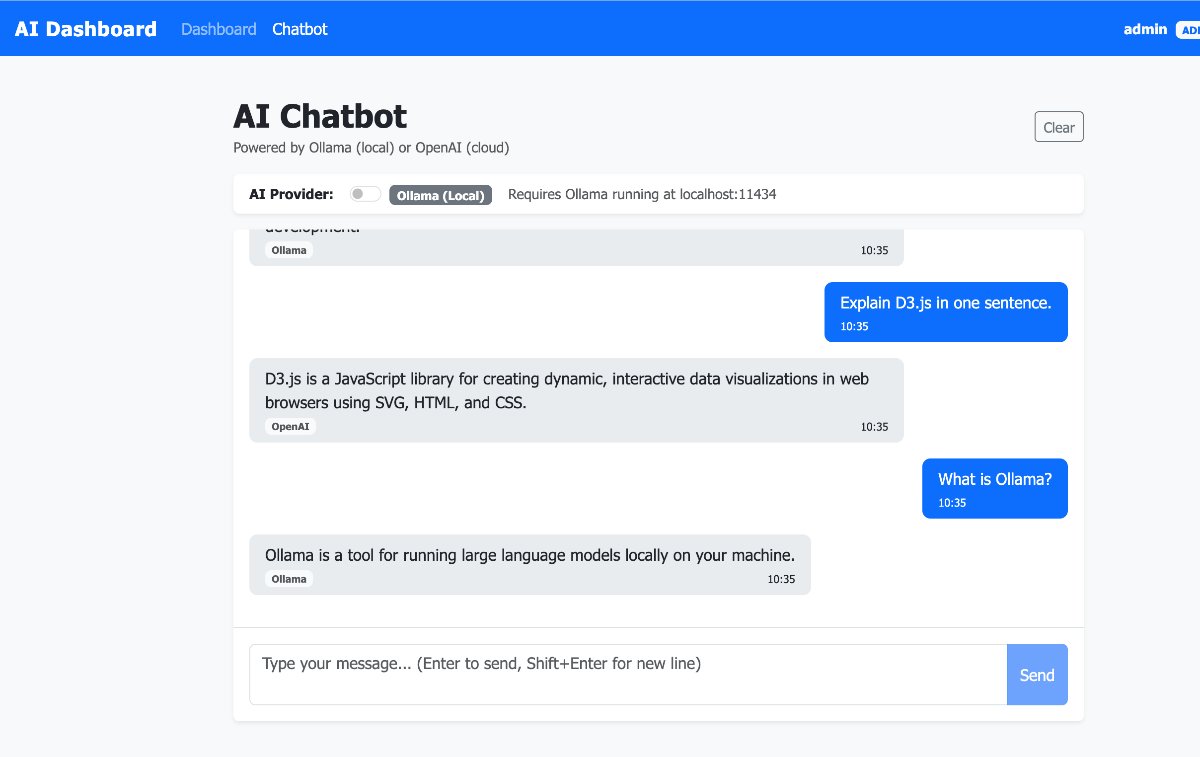

Claude had more trouble with standard Angular and D3.js, and it struggled with its own code:

- It could not get it to build.

- It recognized that it had made a mess.

Security: Basic but Flawed

- Both: Implemented Spring Security correctly (basic auth, roles).

- Codex: Allowed all REST methods (*).

- Claude: Restricted to GET, POST, PUT, DELETE, OPTIONS.

- Risk: If you use PATCH, Claude’s setup might break.
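
To make the trade-off concrete, here is a toy model of an allowed-methods check. It is only a sketch of the idea, not Spring's implementation, and the AllowedMethods class is a hypothetical name: a wildcard lets everything through, while an explicit list rejects anything you forgot to add.

```java
import java.util.Locale;
import java.util.Set;

// Toy model of an allowed-methods list; not Spring's actual implementation.
final class AllowedMethods {
    private final Set<String> methods;

    AllowedMethods(String... methods) {
        this.methods = Set.of(methods);
    }

    // A "*" entry permits every method; otherwise the method must be listed.
    boolean permits(String method) {
        return methods.contains("*")
                || methods.contains(method.toUpperCase(Locale.ROOT));
    }
}
```

With the wildcard, PATCH is permitted; with the explicit list GET, POST, PUT, DELETE, OPTIONS it is not. The explicit list is the safer default, as long as it is kept in sync with the endpoints you actually expose.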

Tests: Minimalist (and Useless)

- Both: Created basic, similar tests.

- Codex: Left an empty test package.

- Verdict: Not production-ready.

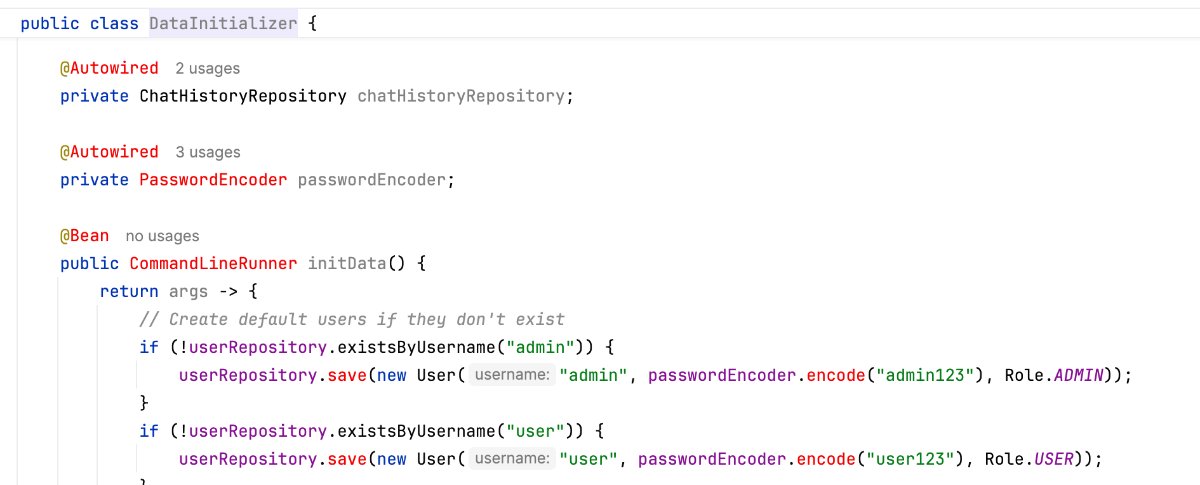

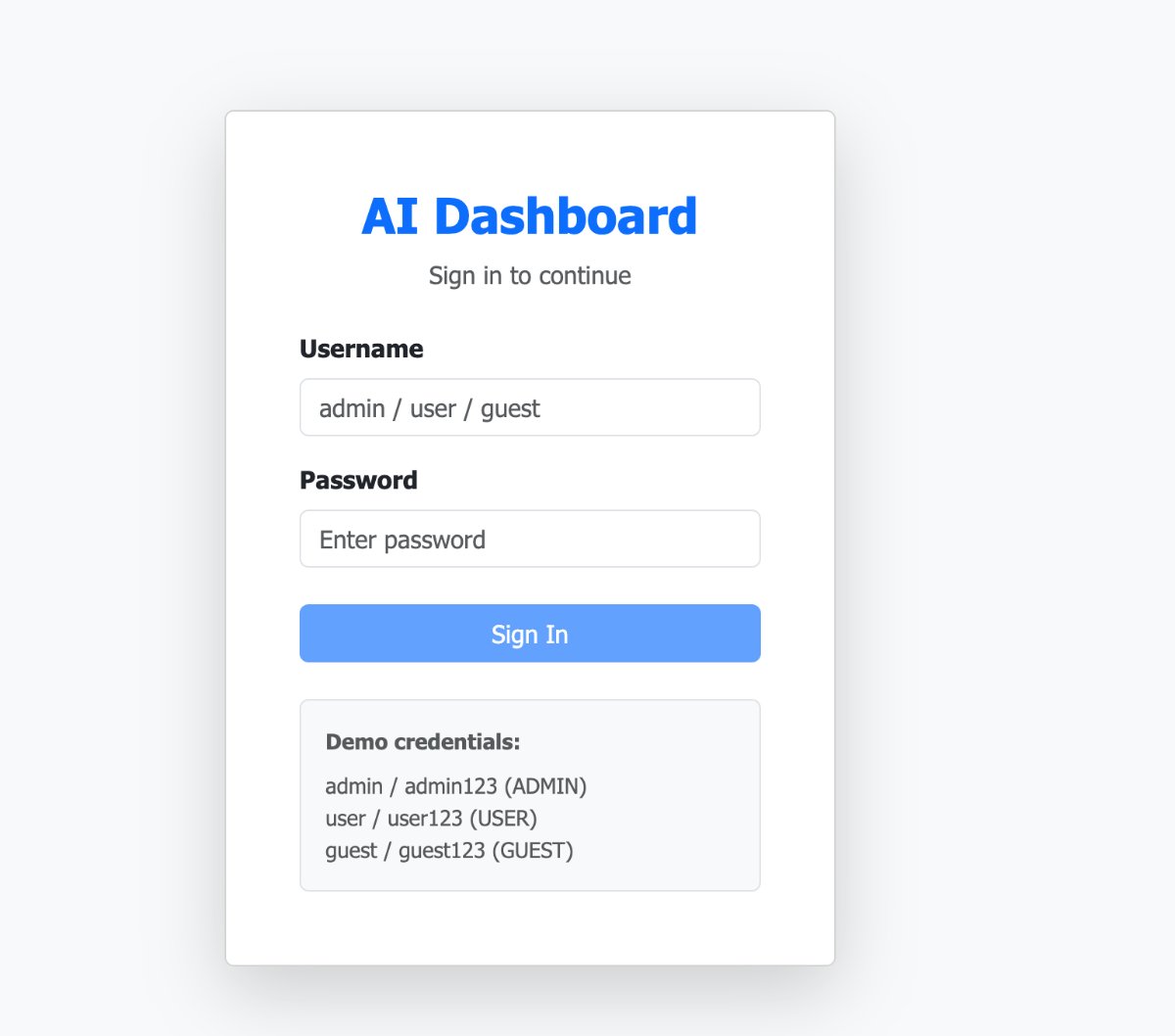

Database: Hardcoded Passwords? Really?

- Claude: Initialized records directly in the code, including hardcoded passwords.

- Excuse: "Password encoding required it."

- Reality: Security 101 violation.
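
Seeding users does not require a password literal in the source. A minimal sketch, assuming the seed password is supplied through the environment: the SeedSecrets class and the SEED_ADMIN_PASSWORD variable name are hypothetical, and the value would still need to go through the password encoder before being stored.

```java
import java.util.Map;

// Hypothetical helper: reads a required secret from the environment
// and fails fast instead of falling back to a hardcoded default.
final class SeedSecrets {
    private SeedSecrets() {}

    static String require(Map<String, String> env, String name) {
        String value = env.get(name);
        if (value == null || value.isBlank()) {
            throw new IllegalStateException("Missing required secret: " + name);
        }
        return value;
    }
}
```

In the application this would be called as SeedSecrets.require(System.getenv(), "SEED_ADMIN_PASSWORD"). Passing the map in explicitly keeps the helper easy to test.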

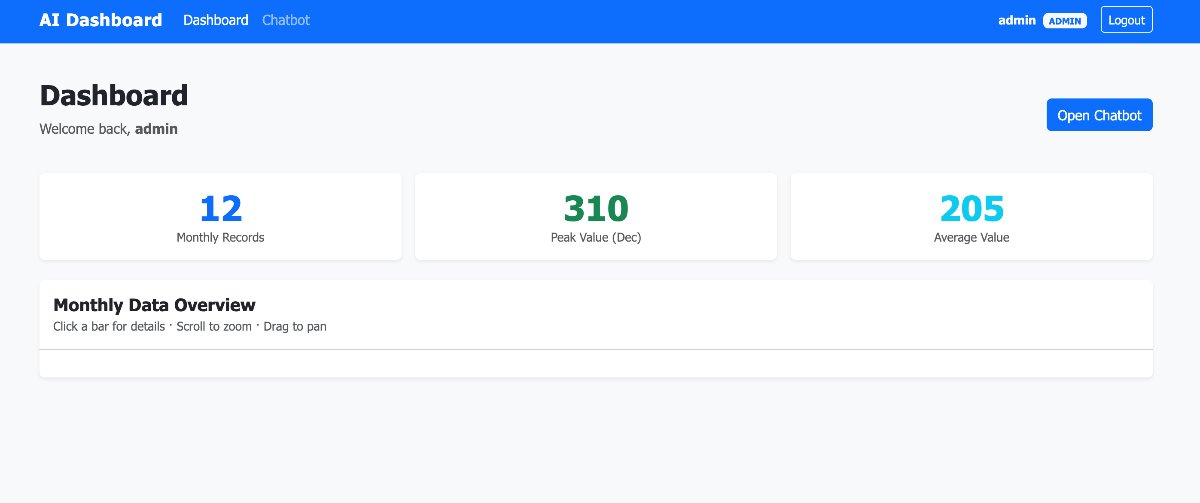

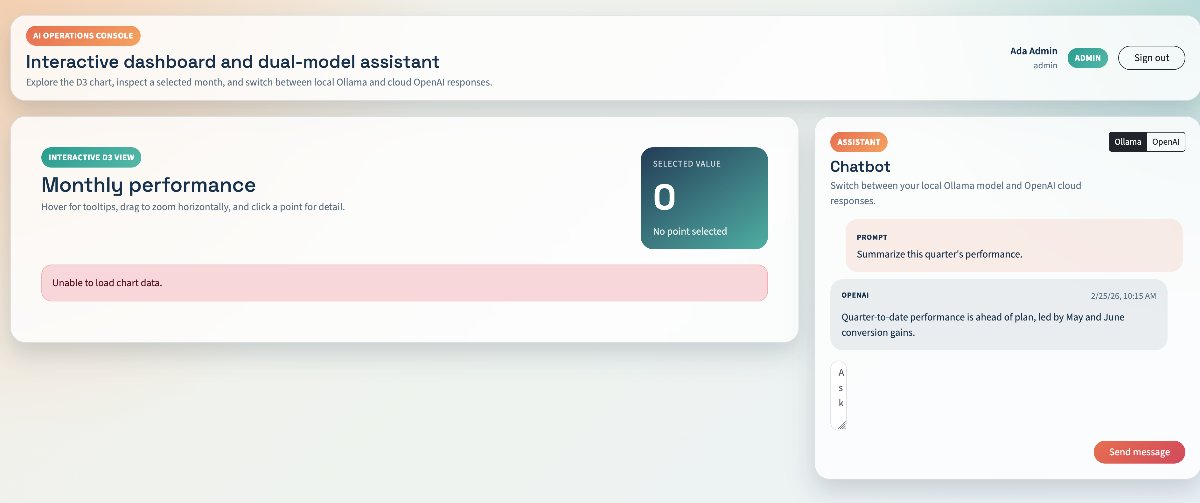

UI: Subjective, but Claude Wins

- Claude: More polished, professional.

- Codex: Felt like a basic prototype.

- Note: This is subjective, but Claude’s UI was cleaner in past projects too.

Here Claude, professional:

Here Codex, from the beach on holiday:

Verdict: LLMs Are Assistants, Not Replacements

The Good

- Prototyping: LLMs speed up initial setup and boilerplate.

- Bug Fixing: They’re great for debugging and CSS tweaks.

- Cost: $20/month is worth it for rapid iteration.

The Bad

- Versioning: They default to outdated dependencies unless explicitly told otherwise.

- Quality: Working ≠ production-ready. Senior devs must review every line.

- Security: Hardcoded credentials and lax REST methods are red flags.

The Ugly

- Left unsupervised, they produce "working garbage."

- Codex’s structure was better for large projects.

- Claude’s UI was more professional.

Lessons Learned

Be Explicit:

- Specify versions, dependencies, and patterns upfront.

- Example: "Use Java 25, Spring Boot 4.0.3, and Angular 21."

Review Everything:

- LLMs hallucinate dependencies and ignore best practices.

- Critical environments (finance, health): LLM-generated code must be manually verified—no exceptions. The cost of a bug isn’t just debugging time; it’s compliance violations, audits, or worse.

Frontend vs. Backend Quality:

- Frontend code tends to be higher quality than backend. Likely reason: modern frontend frameworks (Angular, React, Vue) have consistent, opinionated patterns, while backend Java code online is a graveyard of legacy architectures, deprecated practices, and "enterprise" anti-patterns from the last 20 years.

- Result: LLMs regurgitate old Java habits (@Autowired, XML configs, monolithic structures) unless explicitly forbidden.

Build Your Team:

- Claude: Frontend developer. Better at UI/UX, modern Angular, and standalone components.

- Codex: Backend developer. Better at structuring features, Spring Boot, and avoiding spaghetti code.

- Next in line: Mistral and Alibaba models—benchmarks coming soon.

Skills and Dedicated Agents Improve Quality (At a Cost):

- Custom skills (e.g., "Use DTOs, avoid @Autowired") reduce garbage output.

- Dedicated agents (e.g., one for backend, one for frontend) improve focus but add complexity.

- Trade-off: Better code, but now you’re managing an LLM orchestra instead of writing it yourself.

Technical Debt Disaster

Claude and Codex imported a lot of old libraries and frameworks, even though the exact versions were clearly defined in the prompt. When they got blocked on an issue, they simply downgraded the versions.

- In a professional environment, this would trigger a flood of CVE alerts for your application. Best case, the deployment is blocked; worst case, it gets escalated as a security risk.

- Not using the latest version means you will have to upgrade yourself or request a migration. If you work on serious projects, you know how costly that is and how many surprises you will run into.

Price vs. Value:

- $20/month is justified if it saves hours of boilerplate. In regulated industries, factor in review overhead—the "savings" might evaporate.

- At the moment Codex offers more tokens per dollar than Claude, so I tend to use Codex for more tasks and limit Claude to complicated UIs. With Claude, even on the 'cheap' Sonnet, we hit the limits quickly.

Now, back to writing actual code.

--

Specification used

Specification created by Mistral AI

Here’s the **final, actionable specification** for your AI to build the application, incorporating all your requirements:

## **Final AI Specification: Spring Boot + Angular App with D3.js and Ollama Chatbot**

### **1. Overview**

- **Purpose**: Professional web app with role-based access, an **interactive D3.js dashboard**, and a **chatbot** (Ollama for local LLM, OpenAI for cloud).

- **Tech Stack**:

- **Backend**: Spring Boot (latest), Java 25, Spring Data JPA, Hibernate, REST API, **Spring AI 2.0 (SNAPSHOT)**.

- **Frontend**: Angular (latest), Bootstrap, **D3.js** (interactive chart).

- **Database**: H2 (dev), PostgreSQL (prod), **Liquibase** (for migrations and test data).

- **Security**: JWT (Spring Security defaults).

- **Deployment**: Docker (Angular + Spring Boot; PostgreSQL separate).

### **2. Frontend (Angular)**

#### **Dashboard**

- **Interactive D3.js Chart**:

- Type: Bar/line chart with **tooltips**, **zoom**, and **click events**.

- Data: Fetched from /api/dashboard/chart.

- Example data structure:

```json
[
  { "month": "Jan", "value": 100, "details": "Sample data" },
  { "month": "Feb", "value": 150, "details": "Sample data" }
]
```

- **Responsive**: Bootstrap 5 (mobile-friendly).

#### **Chatbot UI**

- **Features**:

- Text input/output.

- Toggle to switch between **Ollama (local)** and **OpenAI (cloud)**.

- **Conversation history**: Display past messages (fetched from /api/chat/history).

- **Styling**: Bootstrap modal or sidebar.

### **3. Backend (Spring Boot)**

#### **Dependencies**

- Use **Spring Initializr** with:

- Spring Web, Security, Data JPA, H2, PostgreSQL, Liquibase.

- **Spring AI 2.0 SNAPSHOT** (for Ollama/OpenAI integration):

```xml
<dependency>
    <groupId>org.springframework.ai</groupId>
    <artifactId>spring-ai-core</artifactId>
    <version>2.0.0-SNAPSHOT</version>
</dependency>
<dependency>
    <groupId>org.springframework.ai</groupId>
    <artifactId>spring-ai-ollama-spring-boot-starter</artifactId>
    <version>2.0.0-SNAPSHOT</version>
</dependency>
<dependency>
    <groupId>org.springframework.ai</groupId>
    <artifactId>spring-ai-openai-spring-boot-starter</artifactId>
    <version>2.0.0-SNAPSHOT</version>
</dependency>
```

#### **API Endpoints**

| Endpoint | Method | Description |
|----------------------|--------|-------------|
| /api/dashboard/chart | GET | Returns JSON data for D3.js chart. |
| /api/chat | POST | Accepts { "prompt": "user input", "useCloud": boolean }. Returns LLM response. |
| /api/chat/history | GET | Returns conversation history for the current user. |

#### **LLM Configuration**

- **Ollama (Local)**:

- Default URL: http://localhost:11434.

- Model: llama3 (or user-specified).

- **OpenAI (Cloud)**:

- API key required (spring.ai.openai.api-key).

- **Fallback**: If no LLM is configured, return:

```json
{ "response": "LLM not configured. Please check your settings." }
```

#### **Database (Liquibase)**

- **Changelog Example**:

```xml
<changeSet id="1" author="ai">
    <createTable tableName="chat_history">
        <column name="id" type="bigint" autoIncrement="true" primaryKey="true"/>
        <column name="user_id" type="bigint"/>
        <column name="prompt" type="varchar(500)"/>
        <column name="response" type="varchar(2000)"/>
        <column name="timestamp" type="timestamp"/>
        <column name="is_cloud" type="boolean"/>
    </createTable>
</changeSet>
```

### **4. Sample Tests**

#### **Liquibase Test Data**

- Preload H2 with:

- 3 users (admin/user/guest).

- Sample dashboard data.

- Sample chat history.

#### **Spring Boot Tests**

- **Repository Layer**: @DataJpaTest for ChatHistoryRepository.

- **Controller Layer**: @WebMvcTest for ChatController (mock LLM responses).

- **Example Test**:

```java
@SpringBootTest
class ChatControllerTest {
    @Test
    void testChatEndpoint() {
        // Mock Ollama/OpenAI response
        // Test POST /api/chat
    }
}
```

### **5. Docker Deployment**

#### **App Container**

- **Multi-stage Dockerfile**:

```dockerfile
# Stage 1: Build Angular
FROM node:latest AS angular-build
WORKDIR /app
COPY frontend/package*.json ./
RUN npm install
COPY frontend .
RUN npm run build --prod

# Stage 2: Build Spring Boot
FROM openjdk:25-jdk-slim
WORKDIR /app
COPY backend/build/libs/*.jar app.jar
COPY --from=angular-build /app/dist/frontend ./static
EXPOSE 8080
ENTRYPOINT ["java", "-jar", "app.jar"]
```

- **Environment Variables**:

- SPRING_PROFILES_ACTIVE=prod

- OPENAI_API_KEY (optional, for cloud LLM)

- OLLAMA_BASE_URL=http://host.docker.internal:11434 (for local LLM)

#### **PostgreSQL**

- Separate container (not managed by Docker Compose in prod).

### **6. AI Instructions**

1. **Generate Project**:

- Use Spring Initializr and Angular CLI.

- Add D3.js (npm install d3).

2. **Implement**:

- Interactive D3.js chart with tooltips/zoom.

- Chatbot with Ollama/OpenAI toggle and history.

- Liquibase migrations for all tables.

3. **Dockerize**:

- Build Angular → Copy to Spring Boot static.

- Configure LLM via environment variables.

### **7. Final Notes**

- **Ollama**: Ensure it’s running locally for dev (docker run -p 11434:11434 ollama/ollama).

- **OpenAI**: Requires API key for cloud mode.

- **Conversation History**: Saved to PostgreSQL/H2.