How good is LLM code? A self-evaluation by AI

Vibe coding is fun. It is fast, creative, and sometimes genuinely exciting. In just a few hours, an LLM can generate features that would normally take days or weeks to build.

But that is not the real question.

The real question is this: how good is the code once the excitement is over?

Over the last few weeks, I built more than 20 projects with LLMs. Some were created with coding agents, some with agentic workflows, and some with a more direct prompt-driven approach. I enjoyed the experiment a lot. The feeling of coding ten times faster is a bit like driving a convertible on the highway for the first time: fun, powerful, and slightly unreal.

Some of these projects ended up with beautiful, modern user interfaces. After just four hours, they already had features that would normally require months of spare-time development. I work on large and complex financial systems, so I would never have imagined building a video editor in a few hours with Java and Angular. The same happened with WebAssembly: I built a small Kotlin/Wasm application without even looking at most of the generated code.

That is the magic part.

Then comes the review.

Fast code, lots of code

At the beginning, I tried to review everything carefully. Then I got bored. And after a few iterations, most of the code had already changed anyway.

To be clear, I used this approach only for pet projects. The goals were simple:

- understand how LLMs are changing our work as developers

- evaluate the quality of the generated code

- see how these tools could affect real-world projects

- build personal tools I always wanted, but never had time to implement

And that is exactly what happened: in a few weeks I created more than 20 projects with a huge amount of code, most of it unread until something started behaving strangely.

Agents, skills, and unreliable employees

Yes, I tried to follow the recommended practices. I created skills, used AGENTS.md and CLAUDE.md, and added project instructions where it made sense.

My “employees” were Claude, Codex, Gemini, and Mistral. A couple of them were clearly more capable than the others, so they got priority on the more important tasks.

What surprised me was how human they sometimes felt, often in the worst way.

Much like a human employee, an agent can start a large refactoring, finish half of it, and then disappear because the token budget has run out. That is a strange way to work with a teammate: they break the code, stop in the middle, and then basically say, “Either pay more or wait a few hours.”

Funny? Yes. Professional? Not really.

So what is the quality of the code?

I will leave the full collection of agent fights for another post. Sometimes they are brilliant. Sometimes they behave worse than a junior developer with too much confidence.

One pattern is surprisingly consistent: retrieving too much data and then filtering it in the backend or frontend, as if database queries did not exist. Add Hibernate to the picture, and the usual N+1 disasters follow quickly.
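To make the pattern concrete, here is a minimal Java sketch (Java 17 assumed). It does not use Hibernate: FakeDb, Customer, Order and the query methods are hypothetical stand-ins that only count round trips, so the cost of each access pattern is visible. The first method loads every order, filters in memory, and then fetches each customer separately (1 + N queries); the second pushes the filter into the query and batch-loads the customers (2 queries).

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.stream.Collectors;

// Hypothetical in-memory stand-in for a database: it only counts round trips.
// It is not Hibernate; the point is how many queries each access pattern issues.
class QueryPatterns {

    record Customer(long id, String name) {}

    record Order(long id, long customerId, String status) {}

    static class FakeDb {
        final Map<Long, Customer> customers = new HashMap<>();
        final List<Order> orders = new ArrayList<>();
        int queryCount = 0;

        // Like SELECT * FROM orders: every row, whatever the caller needs
        List<Order> findAllOrders() {
            queryCount++;
            return new ArrayList<>(orders);
        }

        // Like SELECT ... WHERE status = ?: the filter runs "in the database"
        List<Order> findOrdersByStatus(String status) {
            queryCount++;
            return orders.stream().filter(o -> o.status().equals(status)).toList();
        }

        // Like SELECT ... WHERE id = ?: one round trip per customer
        Customer findCustomer(long id) {
            queryCount++;
            return customers.get(id);
        }

        // Like SELECT ... WHERE id IN (...): one round trip for the whole batch
        Map<Long, Customer> findCustomersByIds(Set<Long> ids) {
            queryCount++;
            return ids.stream()
                    .filter(customers::containsKey)
                    .collect(Collectors.toMap(id -> id, customers::get));
        }
    }

    // Anti-pattern: load everything, filter in memory, then one query per open order (1 + N)
    static List<String> openOrderCustomersNaive(FakeDb db) {
        List<String> names = new ArrayList<>();
        for (Order o : db.findAllOrders()) {
            if (o.status().equals("OPEN")) {
                names.add(db.findCustomer(o.customerId()).name());
            }
        }
        return names;
    }

    // Filter in the query, then batch-load the customers (2 queries in total)
    static List<String> openOrderCustomersBatched(FakeDb db) {
        List<Order> open = db.findOrdersByStatus("OPEN");
        Set<Long> ids = open.stream().map(Order::customerId).collect(Collectors.toSet());
        Map<Long, Customer> byId = db.findCustomersByIds(ids);
        return open.stream().map(o -> byId.get(o.customerId()).name()).toList();
    }

    // Hypothetical sample data: three customers, five orders, four of them open
    static FakeDb sampleDb() {
        FakeDb db = new FakeDb();
        for (Customer c : List.of(new Customer(1, "Ada"), new Customer(2, "Linus"), new Customer(3, "Grace"))) {
            db.customers.put(c.id(), c);
        }
        db.orders.addAll(List.of(
                new Order(10, 1, "OPEN"), new Order(11, 2, "CLOSED"), new Order(12, 3, "OPEN"),
                new Order(13, 1, "OPEN"), new Order(14, 2, "OPEN")));
        return db;
    }

    public static void main(String[] args) {
        FakeDb naiveDb = sampleDb();
        FakeDb batchedDb = sampleDb();
        System.out.println(openOrderCustomersNaive(naiveDb));     // [Ada, Grace, Ada, Linus]
        System.out.println(openOrderCustomersBatched(batchedDb)); // [Ada, Grace, Ada, Linus]
        System.out.println(naiveDb.queryCount + " queries vs " + batchedDb.queryCount); // 5 queries vs 2
    }
}
```

Both methods return the same names, but the naive one issues 5 queries on this data and grows linearly with the number of open orders, while the batched one stays at 2. With a real ORM, the equivalent fix is usually a filtered query plus a fetch join or batch fetching; which one fits depends on the mapping.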

Modern development

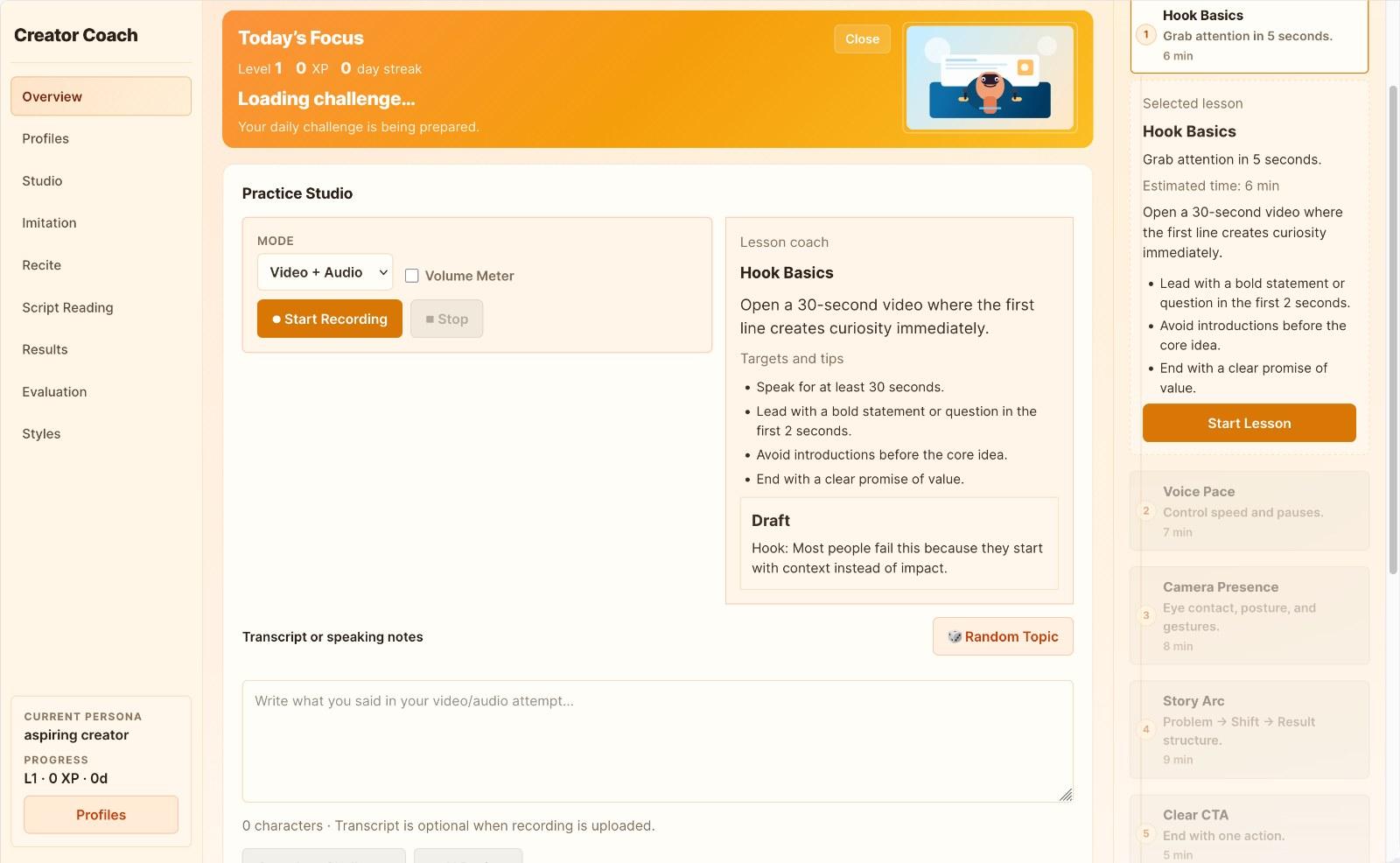

But I want to focus on one project in particular because it was the one I enjoyed most. It used Java, React, Python, Ollama, and a few other tools. The goal was to build a tutor that could help users analyze their communication and language skills.

After two days of slot-machine development, I realized the project had reached a dead end.

The problem was not only the code. It was also the current state of the models. I tried one open-source model and one mainstream model, and while both were excellent at turning speech into text, they were much weaker at understanding the broader quality of human communication. Transcription was good. Real coaching and nuanced feedback were much harder.

At that point, I decided to do something interesting: I asked the LLMs to evaluate the code they had written.

Mistral: 6.5/10

Mistral gave the project an overall 6.5.

It was generous in a few areas:

- Architecture: 8/10

- Error handling: 8/10

It clearly appreciated the layered structure and the fallback logic.

The weak points were more revealing:

- Maintainability: 5/10

- Readability: 6/10

- Testing: 3/10

The frontend had become a monster. Almost everything ended up in App.tsx, which grew beyond 3,000 lines. I had explicitly asked to keep files under 300 lines, but that instruction did not survive contact with the agent.

So according to Mistral, the application was not terrible, but it was hard to read, hard to maintain, and barely tested.

In other words: a very human result.

Gemini: 4.0/10

Gemini was much harsher and gave the project an overall 4.0.

Maybe that is because it was less involved in the development and had to fix bugs left by the others. Or maybe it was simply more honest.

Its review was painful, but fair.

The backend received 6.0 for separation of concerns, but large classes and bloated services dragged the score down.

The frontend got 2.0.

That part was brutal:

- App.tsx had grown to almost 4,000 lines

- there were thousands of lines of hardcoded values

- the components folder, which I had explicitly requested, was empty

- too much JSON and configuration data lived directly in the frontend instead of a more appropriate place

Testing and reliability got 1.0, which honestly felt accurate.

Gemini also pointed out something important: the rules described in AGENTS.md were only partially followed. This is one of the most frustrating things about these tools. You can define rules, structure, and constraints, and they may still ignore them the moment the task becomes large enough.

On the positive side, it gave 8/10 for AI and innovation. The project ideas were good. The implementation discipline was not.

Claude: 5.5/10

Claude was one of the main contributors, so this was close to a self-evaluation.

Here is the short version of its review.

Overall score: 5.5/10

Backend: 7.5/10

Claude liked:

- the layered architecture

- centralized exception handling

- the AI fallback chain

- thread-safe in-memory state with ConcurrentHashMap

- basic file upload protections

- externalized configuration
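The ConcurrentHashMap point deserves one caveat: the map makes individual operations thread-safe, but a get() followed by a put() is still a race. A minimal Java sketch, assuming a hypothetical PracticeSessionStore that counts practice attempts per user (the project's real classes are not shown in this post), uses merge(), which ConcurrentHashMap performs atomically per key.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

// Hypothetical in-memory state: practice attempts counted per user.
class PracticeSessionStore {
    private final ConcurrentHashMap<String, Integer> attemptsByUser = new ConcurrentHashMap<>();

    // merge() runs the read-modify-write atomically for this key.
    // A separate get() followed by put() could lose updates when two requests race.
    int recordAttempt(String userId) {
        return attemptsByUser.merge(userId, 1, Integer::sum);
    }

    int attempts(String userId) {
        return attemptsByUser.getOrDefault(userId, 0);
    }

    // Simulates concurrent requests for the same user coming from several threads
    static PracticeSessionStore simulate(int threads, int attemptsPerThread) {
        PracticeSessionStore store = new PracticeSessionStore();
        ExecutorService pool = Executors.newFixedThreadPool(threads);
        for (int t = 0; t < threads; t++) {
            pool.submit(() -> {
                for (int i = 0; i < attemptsPerThread; i++) {
                    store.recordAttempt("user-1");
                }
            });
        }
        pool.shutdown();
        try {
            if (!pool.awaitTermination(10, TimeUnit.SECONDS)) {
                throw new IllegalStateException("workers did not finish in time");
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for workers", e);
        }
        return store;
    }

    public static void main(String[] args) {
        System.out.println(simulate(8, 1_000).attempts("user-1")); // 8000
    }
}
```

Eight threads doing 1,000 increments each always end at 8,000 with merge(); the same loop written as get-then-put on the same map can come up short under contention.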

It criticized:

- PracticeService for doing too much

- silent failures in some AI service fallbacks

- missing validation annotations on DTOs

- overly broad CORS configuration

That feels fair. The backend was not clean, but it was at least recognizable as software architecture.
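Silent fallback failures are easy to avoid. Here is a minimal Java sketch, assuming hypothetical Provider and Result types rather than the project's actual AI services: each provider is tried in order, every failure is logged with java.util.logging and recorded, and if all providers fail the caller gets an exception carrying the individual causes as suppressed exceptions, instead of an empty or invented answer.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Function;
import java.util.logging.Logger;

// Hypothetical fallback chain: the first provider that answers wins,
// but no failure along the way is swallowed.
class AiFallbackChain {
    private static final Logger LOG = Logger.getLogger(AiFallbackChain.class.getName());

    record Provider(String name, Function<String, String> call) {}

    // The answer plus the list of providers that failed before it
    record Result(String providerName, String answer, List<String> failures) {}

    private final List<Provider> providers;

    AiFallbackChain(List<Provider> providers) {
        this.providers = List.copyOf(providers);
    }

    Result ask(String prompt) {
        List<String> failures = new ArrayList<>();
        IllegalStateException allFailed = new IllegalStateException("all AI providers failed");
        for (Provider p : providers) {
            try {
                String answer = p.call().apply(prompt);
                return new Result(p.name(), answer, List.copyOf(failures));
            } catch (RuntimeException e) {
                // Make the failure visible instead of silently moving on
                LOG.warning(() -> "Provider " + p.name() + " failed: " + e.getMessage());
                failures.add(p.name() + ": " + e.getMessage());
                allFailed.addSuppressed(e);
            }
        }
        // Every provider failed: surface it to the caller with all causes attached
        throw allFailed;
    }

    public static void main(String[] args) {
        AiFallbackChain chain = new AiFallbackChain(List.of(
                new Provider("local-model", prompt -> { throw new RuntimeException("connection refused"); }),
                new Provider("cloud-model", prompt -> "Feedback for: " + prompt)));
        Result r = chain.ask("Hello");
        System.out.println(r.providerName() + " -> " + r.answer() + " after " + r.failures());
    }
}
```

Returning the failures alongside the answer lets the caller show or report that the primary model was skipped, which is exactly the information a silent fallback hides.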

Frontend: 3.5/10

This was the real disaster.

Main issues:

- App.tsx had 3,830 lines

- it contained 68 useState hooks

- only two tiny components had been extracted

- AppContext.tsx was created and then abandoned

- there was no ESLint, no Prettier, and no pre-commit hooks

Claude did recognize a few good parts:

- api.ts was clean

- useRecording.ts was well written

- the TypeScript types were fairly complete and aligned with the backend DTOs

But those were islands in a monolith.

Tests: 1/10

Only one empty smoke test existed for the whole codebase.

That is not a testing strategy. That is decoration.

And this is one of the clearest patterns I have seen with LLM-generated projects: they are happy to generate visible features first, but unless you force them very explicitly, testing is one of the first things they neglect.

Codex: no review without billing

Codex did not complete the evaluation because the token budget was exhausted.

I was offered a very modern choice: wait for a reset, or pay more.

You can imagine my answer.

Does AI love monoliths? What I learned

I have to admit that I did not create 300 ultra-detailed instructions for the agents in this project. It was a prototype, and I did not want to spend too much time writing and validating rules before I even knew whether the idea was worth pursuing.

Still, the results were interesting.

The biggest lesson was how bad the frontend code became.

Coming from Angular, it feels almost unnatural to build everything as one giant monolith. But without strong instructions, LLMs often drift toward exactly that style: inline logic, mixed responsibilities, giant files, and almost no real component structure.

I noticed the same pattern in other stacks too. In Angular, they happily mix HTML, CSS, and TypeScript inline and keep growing the same class. In Python, if you do not force a multi-file structure, they keep adding more code into the same file forever.

One plausible theory is that monoliths are easier for the model: the context is all in one place, so it keeps appending code instead of designing structure.

That may be convenient for the LLM.

It is terrible for the human who has to debug the result later, especially when the agent runs out of tokens and leaves in the middle of a half-finished refactoring.

Skill issue? Are we project managers now?

Some might argue that this is not a real problem, just a skill issue. In this project, I did not enforce strict rules or detailed constraints. In other projects, I use much more structured setups with agents, sub-agents, skills, and explicit guidelines.

I plan to repeat the same evaluation on larger and more important projects to see how much those constraints actually improve the outcome.

However, this raises a deeper concern.

If LLMs do not reliably internalize good engineering practices, and instead require carefully designed systems of rules, agents, and coordination layers, then our role starts to shift. We are no longer just developers writing software; we become orchestrators of virtual teams.

In other words, we start behaving like project managers.

And that is ironic, because for many engineers, that is one of the least appealing roles (and the subject of plenty of jokes in our community).

Final verdict

After asking three LLMs to evaluate code they had helped write, the pattern was surprisingly consistent:

| Area | Score (out of 10) |
|---|---|
| Backend | 7 |
| Frontend | 3 |
| Testing | 1 |
| Maintainability | 5 |

That is the part I find most interesting.

LLMs are incredible accelerators. They can help you explore ideas, build prototypes, generate features quickly, and enter unfamiliar technical areas much faster than before.

But speed is not the same as quality.

In my experience, they are much better at creating momentum than at preserving structure. They are good at making progress look impressive. They are much less reliable when it comes to maintainability, testing, boundaries, and long-term clarity.

So, how good is LLM-generated code?

My current answer is: good enough to impress you, often too messy to trust, and very expensive to ignore once the project grows.

Or, to put it more bluntly: sometimes it looks like a normal human project, just created ten times faster and often ten times bigger.